Metascience

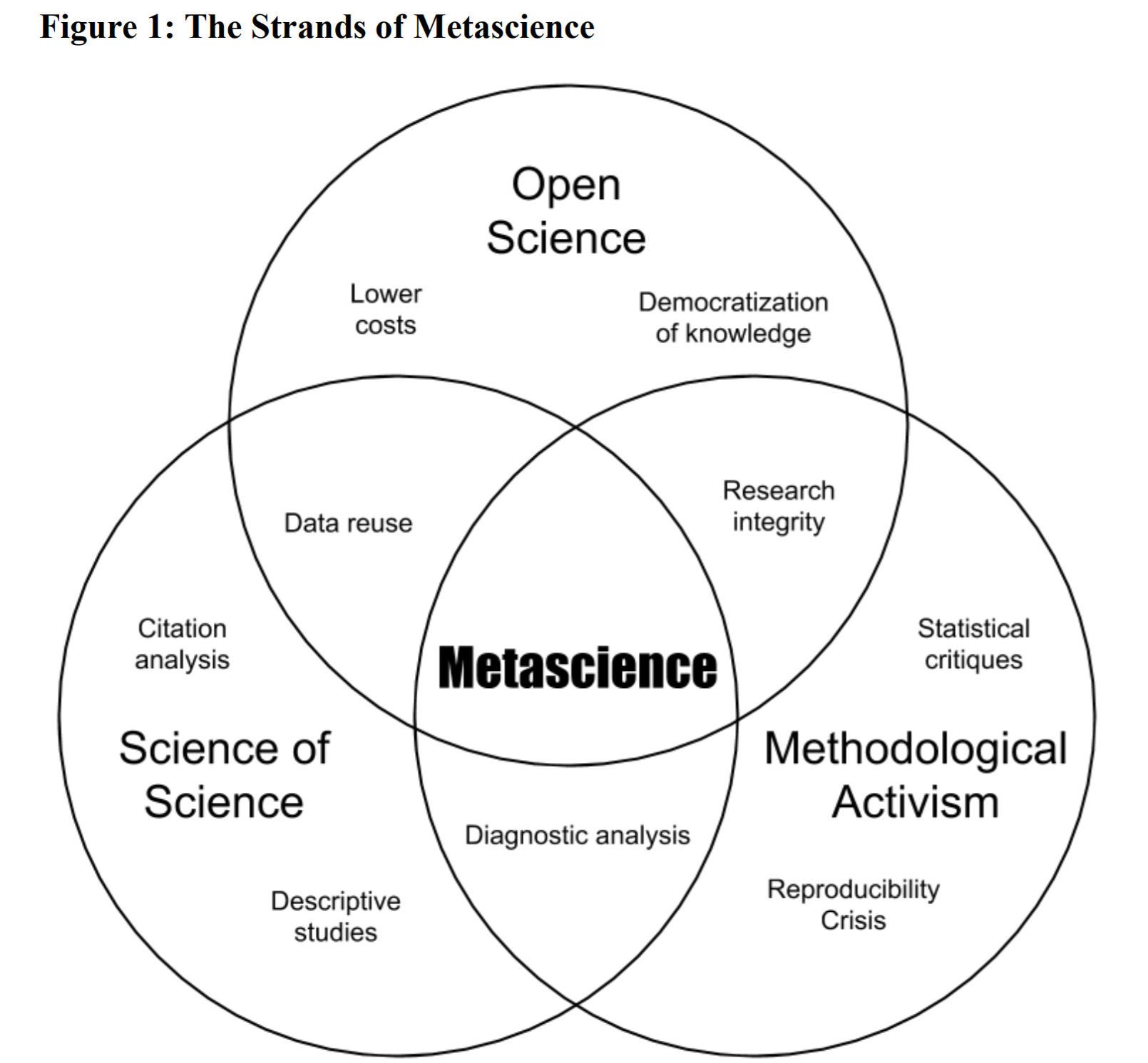

"Metascience is the use of scientific methodology to study science itself. Metascience seeks to increase the quality of scientific research while reducing inefficiency."

Metascience is the study of the methods and procedures used in scientific research. It is concerned with the identification and characterization of the basic elements of scientific inquiry, and with the ways in which these elements interact to produce reliable knowledge.

Metascience is not primarily concerned with the interpretation or justification of scientific theories, as philosophy of science is. Rather, its focus is on the study of scientific methods and procedures, and on the ways in which they can be improved.

There are four main goals of metascience:

- To identify the basic elements of scientific inquiry

- To characterize the ways in which these elements interact

- To determine the conditions under which scientific knowledge is produced

- To develop ways of improving the quality and reliability of scientific research

Science Progress

Scientific productivity could be improved further. Focused Research Organizations (FROs) and ideas like Private ARPA (PARPA) show one potential solution. This type of organization starts with a goal in mind and works backward to get there. The core idea is that scientific progress sometimes requires a larger engineering effort that puts existing building blocks together.

Reproducibility Crisis

The reproducibility crisis in science has drawn growing attention in recent years. Scientists often find that they cannot reproduce published results, sometimes including their own, and this is having a major impact on the progress of science.

There are a number of reasons for this crisis. One is that experiments are becoming more and more complex, making it harder to control all the variables. Another is that there is increasing pressure on scientists to publish their results, which can lead to them cutting corners in their experiments.

However, there are ways to solve this problem. One is to improve the way experiments are designed, so that they are more reproducible. Another is to provide more funding for replication studies, which are essential for confirming the results of experiments.

The reproducibility crisis in science is a serious problem, but it is one that we can solve. By taking steps to improve the design of experiments and to fund replication studies, we can ensure that science progresses in a robust and reliable way.

Some foundational research might be fundamentally flawed or outright fabricated, which is why progress in ensuring that important, foundational science successfully reproduces might be fundamental to overall scientific progress.

- Replication crisis Wikipedia Page

- “A neuroscience image sleuth finds signs of fabrication in scores of Alzheimer’s articles, threatening a reigning theory of the disease”

- “1,500 scientists lift the lid on reproducibility” by Nature

- “Is science really facing a reproducibility crisis, and do we need it to?”

- “Science has been in a “replication crisis” for a decade. Have we learned anything?” by Vox

- No raw data, no science: another possible source of the reproducibility crisis

Vision for Metascience by Kanjun Qiu and Michael Nielsen

The social processes of science can be changed in many ways, for example:

- Improving peer review

- Changing how grants are awarded

- Selecting people differently to become scientists

- Creating new research institutions

- Decentralizing change

- Aligning change with what is best for science and humanity

- Creating a more structurally diverse ecosystem for doing science

Ideas

- Fund-by-variance: instead of funding grants that get the highest average score from reviewers, a funder should use the variance (or kurtosis or some similar measurement of disagreement) in reviewer scores as a primary signal: only fund things that are highly polarizing (some people love it, some people hate it).

- Encourage scientists to take on more high-risk projects.

- Encourage scientists to be more open about their work, including sharing data and code.

- Fund projects for 100 years instead of the conventional 5- or 10-year timeframe.

- Offer tenure insurance to encourage scientists to take risks.

- Conduct failure audits to check whether programs billed as high-risk actually fail often enough to justify the label.

- Acquire private research institutes instead of letting them fail or change.

- Create an open source institute to produce understanding in the form of open source software and open protocols.

- Institute a traveling scientists program to allow scientists to work in different parts of the world.

- Purchase insurance premiums against unlikely but transformative possibilities in science.

- Maintain a public hall of shame of research funding opportunities that funders turned down.

- Set up an interdisciplinary institute which requires people to work together from at least two different disciplines.

- Make it easier for scientists to get access to data.

- Encourage scientists to blog about their work, or use social media to communicate their work.

- Require that all scientific papers be made available for free online.

- Do science cause-prioritisation research to inform which research is worth funding for the highest potential impact.
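The fund-by-variance idea from the list above can be made concrete with a small sketch. It compares the conventional ranking (highest mean reviewer score) with a ranking by reviewer disagreement. The proposal names, the 1-10 scoring scale, and the choice of population variance as the disagreement measure are all assumptions made for illustration; a real funder might prefer kurtosis or another statistic.

```python
from statistics import mean, pvariance

# Hypothetical reviewer scores (1-10 scale) for three made-up proposals.
scores = {
    "safe-incremental": [7, 7, 8, 7],
    "polarizing": [10, 2, 9, 1],
    "uniformly-weak": [3, 3, 3, 3],
}


def rank_by_mean(scores):
    """Conventional ranking: highest average reviewer score first."""
    return sorted(scores, key=lambda name: mean(scores[name]), reverse=True)


def rank_by_variance(scores):
    """Fund-by-variance ranking: highest reviewer disagreement first."""
    return sorted(scores, key=lambda name: pvariance(scores[name]), reverse=True)


print("by mean:    ", rank_by_mean(scores))
print("by variance:", rank_by_variance(scores))
```

In this toy data the proposal that splits reviewers ranks second by mean score but first by variance, which is the kind of proposal this mechanism is meant to surface.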

Applied positive meta-science by José Ricon; Summary

- Finding more building blocks: better scientific tools, models, datasets etc.

- Tools for (scientific) thought: better tools for all steps of science

- Time is all you need? Freeing up scientists' time from grants, administrative and manual work, etc.

- Various proposals to improve science: Software, Tooling, New Institutions, Funding mechanisms, Activities and norms

Outcome Graphs

The Outcomes Graph is a knowledge base that logs market and scientific research findings and points to the optimum path toward applying science to societal outcomes. The system is designed to recognise the important nodes and relationships in order to characterise outcomes with precision and granularity.

The Outcomes Graph is a tool for representing the state of the applied knowledge frontier, gauging critical pathways and bottlenecks, and finding opportunities to move the frontier forward through venture creation.

The Outcomes Graph is a way of representing knowledge that is composed of nodes (outcomes) and the relationships between them. These relationships can be logical (e.g. a constraint enables a solution) or dynamic (e.g. the AND/OR operators between outcomes).

- Have evidenced discussions: We want to know the state of the relevant pieces of knowledge that inform our arguments, how strong this evidence is, what data supports it, and what other pieces of knowledge the data could be related to.

The Outcomes Graph can be used to identify optimal paths to achieving high-impact ventures, by understanding the necessity and sufficiency of outcomes.

The Outcomes Graph can also be used to discover opportunities for combinatorial innovation, by identifying possible combinations of knowledge that have a high probability of achieving outcomes across completely unrelated knowledge silos.
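To illustrate how nodes, AND/OR operators, and necessity and sufficiency might fit together, here is a minimal sketch. It is not the actual Outcomes Graph implementation: the `Outcome` class, the helper functions, and the example venture are all hypothetical, and the graph is simplified to a tree with uniquely named leaves.

```python
from dataclasses import dataclass, field


@dataclass
class Outcome:
    """A node in the graph. Leaves have no children; inner nodes combine
    their children with "AND" (all required) or "OR" (any one is enough)."""
    name: str
    op: str = "AND"
    children: list = field(default_factory=list)


def achieved(node, known):
    """True if `node` is reached, given the set of leaf names already achieved."""
    if not node.children:
        return node.name in known
    results = [achieved(child, known) for child in node.children]
    if node.op == "AND":
        return all(results)
    if node.op == "OR":
        return any(results)
    raise ValueError(f"unknown operator: {node.op}")


def leaves(node):
    """Names of all leaf outcomes at or below `node`."""
    if not node.children:
        return {node.name}
    return set().union(*(leaves(child) for child in node.children))


def is_necessary(root, leaf):
    """`leaf` is necessary if the root fails even when every other leaf holds."""
    return not achieved(root, leaves(root) - {leaf})


def is_sufficient(root, leaf):
    """`leaf` is sufficient if achieving it alone reaches the root."""
    return achieved(root, {leaf})


# Made-up example: the venture needs a sensor AND at least one production route.
venture = Outcome("low-cost diagnostic", "AND", [
    Outcome("sensor"),
    Outcome("production", "OR", [Outcome("route_a"), Outcome("route_b")]),
])

for leaf in sorted(leaves(venture)):
    print(leaf, is_necessary(venture, leaf), is_sufficient(venture, leaf))
```

In this example only `sensor` comes out as necessary, because either production route can substitute for the other, and no single leaf is sufficient on its own. A bottleneck in this framing is a necessary outcome that has not yet been achieved.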

Discourse Graphs

To make progress in science, scientists need to synthesize and integrate existing knowledge about a scientific problem in order to generate new insights. Synthesis can take many forms, such as a theory, a literature review, or a research proposal, and can be a powerful tool for choosing effective studies and operationalizations. Synthesis is particularly important for tackling problems that cannot be addressed through decisive experimental tests, and may be necessary for scientific progress to be possible at all. An example of the power of synthesis in accelerating scientific progress is the work of Esther Duflo, whose Nobel Prize-winning contributions have been attributed to her synthesis of problems in development economics.

Tech Trees

“While Civilization is just a game, the framework of tech trees can be helpful for thinking about scientific progress in the real world. Every technology can be seen through the lens of the foundational research that made it possible and the future discoveries it enables. However, there is one major difference between the game and reality: In the game, you can scroll to the end of the tech tree to decide whether going down a particular branch will pay dividends in the future. In the real world, the future is unknown, so it’s up to us to imagine new technologies.” – zackchiang.com/spatial-technologies-of-the-future/

Impact Certificates: Hypercerts

Hypercerts are a new primitive for public goods funding that enables retroactive funding. This is done by creating a data layer of impact claims that can be retrospectively funded by organizations or individuals. Hypercerts are agnostic about the mechanisms by which they are funded, and this enables experimentation with different funding models. Retroactive funding provides incentives for creators to take on public goods projects with a potentially high, but uncertain, impact. It also creates a more efficient market by back-propagating signals on what outcomes were impactful post-hoc.
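The retroactive funding flow described above can be sketched as a small data structure. This is an illustrative toy, not the Hypercerts protocol or its schema: the `ImpactClaim` class, its fields, the contributor names, and the proportional payout rule are all assumptions.

```python
from dataclasses import dataclass, field
from fractions import Fraction


@dataclass
class ImpactClaim:
    """Hypothetical record of completed work and who contributed to it."""
    work: str
    shares: dict  # contributor name -> Fraction of the claim (should sum to 1)
    funding: list = field(default_factory=list)  # (funder, amount) pairs

    def fund(self, funder, amount):
        """Record a contribution made after the work was done.
        The claim does not care which mechanism decided the amount."""
        self.funding.append((funder, amount))

    def payouts(self):
        """Split all funding received so far in proportion to the shares."""
        total = sum(amount for _, amount in self.funding)
        return {who: share * total for who, share in self.shares.items()}


claim = ImpactClaim(
    work="open dataset",
    shares={"alice": Fraction(3, 4), "bob": Fraction(1, 4)},
)
claim.fund("foundation", 1000)  # e.g. decided by a grant committee
claim.fund("individual", 200)   # e.g. a direct donation
print(claim.payouts())
```

Because `fund` only records who paid and how much, different funding models (committees, donations, matching schemes) can all attach to the same claim, which mirrors the mechanism-agnostic design described above.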

Links

- A Vision of Metascience - An Engine of Improvement for the Social Processes of Science

- Shifting the impossible to the inevitable: A Private ARPA user manual by Ben Reinhardt

- Applied positive meta-science by José Ricon

- In what sense is the science of science a science? by Michael Nielsen

- New Science’s Report on the NIH by Matt Faherty

- Science funding essays by José Ricon

- Unblock research bottlenecks with non-profit start-ups: ‘Focused research organizations’ can take on mid-scale projects that don’t get tackled by academia, venture capitalists or government labs, by Adam Marblestone et al., Nature

- How Should We Critique Research? by Gwern

- When should an idea that smells like research be a startup?

- What’s wrong with academia?

- The trouble in comparing different approaches to science funding by Michael Nielsen and Kanjun Qiu

- Working notes on the role of vision papers in basic science by Michael Nielsen

- Grants Only Go So Far by Ben Reinhardt

- Market Failures in Science

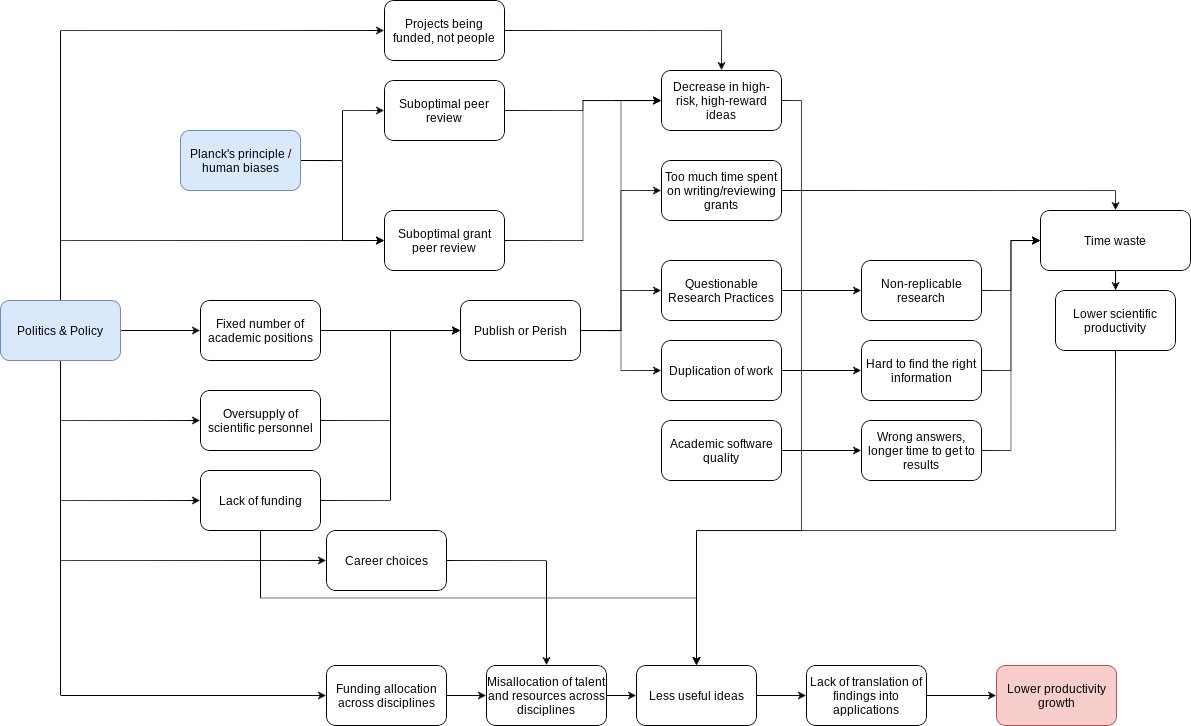

Science is broken visualization by José Ricon

José Ricon's Appendix: A collection of various proposals to improve science

“When I started writing this post I tried to think of some high-level categories for all the “fix science” proposals. Some of these are discussed in earlier posts; see Science Funding.

Software

- Search tools

- Social annotation

- Reference managers

- ML models for replication

- ML for idea generation

Tooling

- Cloud labs (Strateos, ECL)

- Lab automation

- Spatial creativity (Dynamicland? Interior design/architecture to foster collaboration & serendipity, MIT Building 20)

New institutions

- PARPA

- FROs

- Prediction markets for replication

- Uber, but for technicians

- New journals

Funding mechanisms

- Lotteries

- Find the best, fund them

- Fast Grants

- Fund People, not Projects

- Alternative evaluation practices (de-emphasise impact factor, etc.)

- Funding for younger/first-time PIs

- Funding high disagreement research proposals

Norms

- Preregistration

- Replication

- Fund the right studies

- Mandatory retirement

- Cap on funding

- Better methods sections

- Open Science

Activities, norms

- Roadmapping

- Workshops

- Grants to strengthen studies

- Record videos

- Reviews

- Fighting data forging”